

Recognizing Fish in Underwater Video

Overview

Underwater video from Shark Bay, Australia. Courtesy of Dr. Larry Dill, SFU Behavioural Ecology Research Group.

Biologists studying underwater habitats want to know how many fish, and of which species, are present in a local body of water. For example, tallying the fish of each species that enter or leave an area can shed light on food availability or the presence of predators. Leaving underwater video cameras on the sea floor for hours at a time is one non-intrusive way to count fish for such studies.

The drawback of using underwater video for fish censuses is that analyzing the footage is tedious for people: the cameras record for long periods, and there may be long, uneventful gaps between fish sightings.

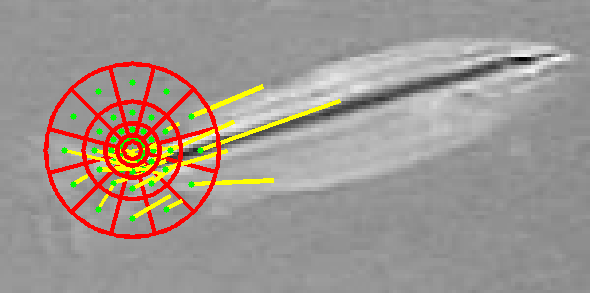

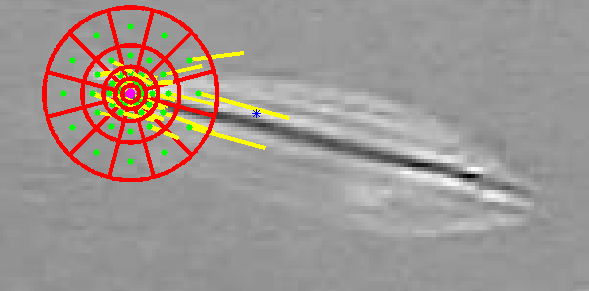

Our research aims to address this problem by automating the analysis of underwater fish census video. Shape contexts are used to propose correspondences between candidate fish images, cropped from frames of underwater video, and a set of known examples of different fish species. Distance transforms and dynamic programming on a tree structure make it computationally feasible to find globally optimal correspondences that minimize both shape context matching cost and spatial distortion. These correspondences are then used to iteratively warp the unknown images into alignment with the known template images.
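
The following is a minimal sketch of why tree-structured matching can be solved exactly and cheaply; it is an illustration under stated assumptions, not the project's code. For brevity it assumes each template point chooses among integer candidate positions on a line (the real problem is 2-D), that `unary[i][x]` stands in for the shape context matching cost of placing template point `i` at candidate `x`, and that spatial distortion is a quadratic penalty `weight * (x_child - x_parent - offset)**2`. The names `distance_transform_1d`, `match_tree`, `offset`, and `weight` are hypothetical. Messages are passed from the leaves to the root, and each message is computed with a generalized distance transform (the lower-envelope-of-parabolas algorithm of Felzenszwalb and Huttenlocher), so the result is the global minimum rather than a local one.

```python
from typing import List, Sequence, Tuple

INF = float("inf")


def distance_transform_1d(
    f: Sequence[float], weight: float, shift: float = 0.0
) -> Tuple[List[float], List[int]]:
    """Return d[x] = min_p f[p] + weight * (x + shift - p)**2 and the minimizing p.

    Lower envelope of parabolas, linear time in len(f).
    Assumes weight > 0 and that every f[p] is finite.
    """
    n = len(f)
    v = [0] * n            # grid positions of the parabolas on the lower envelope
    z = [0.0] * (n + 1)    # boundaries between consecutive envelope parabolas
    k = 0
    z[0], z[1] = -INF, INF

    def intersect(q: int, p: int) -> float:
        # Abscissa where the parabolas rooted at p and q (q > p) cross.
        return ((f[q] + weight * q * q) - (f[p] + weight * p * p)) / (2.0 * weight * (q - p))

    for q in range(1, n):
        s = intersect(q, v[k])
        while s <= z[k]:   # parabola v[k] is hidden below q's parabola: drop it
            k -= 1
            s = intersect(q, v[k])
        k += 1
        v[k] = q
        z[k] = s
        z[k + 1] = INF

    d: List[float] = [0.0] * n
    arg: List[int] = [0] * n
    k = 0
    for x in range(n):
        q = x + shift      # query points increase with x, so k only moves forward
        while z[k + 1] < q:
            k += 1
        d[x] = f[v[k]] + weight * (q - v[k]) ** 2
        arg[x] = v[k]
    return d, arg


def match_tree(
    unary: Sequence[Sequence[float]],
    parent: Sequence[int],
    offset: Sequence[float],
    weight: float,
) -> Tuple[float, List[int]]:
    """Globally minimize
        sum_i unary[i][pos_i] + sum_{i>0} weight * (pos_i - pos_parent[i] - offset[i])**2.

    Part 0 is the root and parent[i] < i for every other part, so visiting
    parts in decreasing index order handles every child before its parent.
    """
    m, n = len(unary), len(unary[0])
    total = [list(row) for row in unary]            # unary cost + messages from children
    best: List[List[int]] = [[] for _ in range(m)]  # best[i][x]: child i's position if parent is at x
    for i in range(m - 1, 0, -1):
        msg, best[i] = distance_transform_1d(total[i], weight, offset[i])
        for x in range(n):
            total[parent[i]][x] += msg[x]
    root = min(range(n), key=lambda x: total[0][x])
    pos = [0] * m
    pos[0] = root
    for i in range(1, m):                           # backtrack from the root to the leaves
        pos[i] = best[i][pos[parent[i]]]
    return total[0][root], pos


if __name__ == "__main__":
    # Invented costs: 3 template points x 6 candidate positions.
    unary = [
        [4.0, 1.0, 3.0, 5.0, 6.0, 2.0],
        [2.0, 5.0, 0.0, 4.0, 3.0, 6.0],
        [3.0, 0.0, 6.0, 2.0, 5.0, 1.0],
    ]
    parent = [-1, 0, 0]        # star-shaped tree rooted at point 0
    offset = [0.0, 1.0, -1.0]  # point 1 expected right of the root, point 2 left of it
    cost, positions = match_tree(unary, parent, offset, weight=0.5)
    print(cost, positions)
```

Each message costs O(n) instead of the O(n²) of trying every parent/child position pair, so a tree of m points is solved in O(mn) time. With a quadratic penalty that separates over the x and y axes, the same 1-D transform can be applied along rows and then columns to handle 2-D image positions.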

The aim of this deformable template matching is to transform the fish bodies in the input images so that a pixel-wise comparison of their appearance with the templates is as accurate as possible. The final stage of the system uses support vector machines (SVMs) to classify the warped input images based on their textures. The output is a count of the number of fish of each species appearing in the video. Our goal is counting and classification accuracy close to that of a human analyst, while requiring less time and effort.
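
A minimal sketch of the classify-and-count stage follows, under loudly stated assumptions. It assumes each warped fish image has already been reduced to a fixed-length texture feature vector (feature extraction is not shown, and the toy vectors are invented), and it trains one linear SVM per species in a one-vs-rest scheme using Pegasos-style subgradient descent, with a fixed visiting order and no bias term. The kernel, features, and solver actually used by the system are not described here, so `train_linear_svm`, `train_one_vs_rest`, `classify`, and `count_species` are hypothetical names.

```python
from collections import Counter
from typing import Dict, List, Sequence

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def train_linear_svm(xs: Sequence[Vector], ys: Sequence[int],
                     lam: float = 0.01, epochs: int = 50) -> List[float]:
    """Subgradient descent (Pegasos step size 1/(lam*t)) on the primal objective
        lam/2 * ||w||^2 + mean_i max(0, 1 - y_i * <w, x_i>),  with y_i in {-1, +1}.
    Examples are visited in a fixed cyclic order; Pegasos proper samples at random.
    """
    w = [0.0] * len(xs[0])
    t = 0
    for _ in range(epochs):
        for x, y in zip(xs, ys):
            t += 1
            eta = 1.0 / (lam * t)
            violated = y * dot(w, x) < 1.0                # hinge loss is active
            w = [(1.0 - eta * lam) * wi for wi in w]      # shrink step from the regularizer
            if violated:
                w = [wi + eta * y * xi for wi, xi in zip(w, x)]
    return w


def train_one_vs_rest(xs: Sequence[Vector], labels: Sequence[str],
                      lam: float = 0.01, epochs: int = 50) -> Dict[str, List[float]]:
    # One binary SVM per species: that species is +1, every other species is -1.
    return {c: train_linear_svm(xs, [1 if l == c else -1 for l in labels], lam, epochs)
            for c in sorted(set(labels))}


def classify(models: Dict[str, List[float]], x: Vector) -> str:
    # Assign the species whose SVM gives the highest decision value.
    return max(models, key=lambda c: dot(models[c], x))


def count_species(models: Dict[str, List[float]], detections: Sequence[Vector]) -> Counter:
    # Per-species tally over all detected (warped) fish.
    return Counter(classify(models, x) for x in detections)


if __name__ == "__main__":
    # Invented toy "texture" vectors for three hypothetical species.
    train_x = [[1.0, 0.5, 0, 0, 0, 0], [0.8, 1.0, 0, 0, 0, 0],
               [0, 0, 1.0, 0.3, 0, 0], [0, 0, 0.2, 0.9, 0, 0],
               [0, 0, 0, 0, 0.7, 1.0], [0, 0, 0, 0, 1.0, 0.4]]
    train_y = ["A", "A", "B", "B", "C", "C"]
    models = train_one_vs_rest(train_x, train_y)
    print(count_species(models, train_x))
```

One-vs-rest turns the multi-species problem into one binary SVM per species, and the tally at the end matches the form of the system's output: a count of fish per species.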



Vision and Media Lab, Simon Fraser University, TASC 8000 and 8002, 8888 University Drive, Burnaby, BC, V5A 1S6, Canada