Greg Mori (1,2) and Jitendra Malik (1)

(1) UC Berkeley Computer Vision Group

(2) Simon Fraser University


This is the homepage of our shape-context-based approach to breaking Gimpy, the CAPTCHA test used at Yahoo! to screen out bots. Our method can successfully pass that test 92% of the time. The approach uses general-purpose algorithms that were designed for generic object recognition. The same basic ideas have been applied to finding people in images, matching handwritten digits, and recognizing 3D objects.

A CAPTCHA is a program that can generate and grade tests that most humans can pass but current computer programs cannot.

EZ-Gimpy and Gimpy, the CAPTCHAs that we have broken, are examples of word-based CAPTCHAs. In EZ-Gimpy, the CAPTCHA used by Yahoo! (shown in the figure above), the user is presented with an image of a single word. This image has been distorted, and a cluttered, textured background has been added. The distortion and clutter are sufficient to confuse current OCR (optical character recognition) software. However, using our computer vision techniques we are able to correctly identify the word 92% of the time.

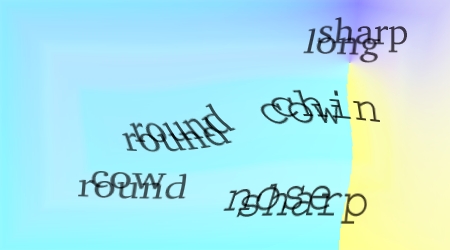

Gimpy is a more difficult variant of a word-based CAPTCHA. Ten words are presented in distortion and clutter similar to EZ-Gimpy. The words are also overlapped, providing a CAPTCHA test that can be challenging for humans in some cases. The user is required to name 3 of the 10 words in the image in order to pass the test. Our algorithm can pass this more difficult test 33% of the time.

The fundamental ideas behind our approach to solving Gimpy are the same as those we are using to solve generic object recognition problems. Our solution to the Gimpy CAPTCHA is just an application of a general framework that we have used to compare images of everyday objects and even find and track people in video sequences. The essences of these problems are similar. Finding the letters "T", "A", "M", "E" in an image and connecting them to read the word "TAME" is akin to finding hands, feet, elbows, and faces and connecting them up to find a human. Real images of people and objects contain large amounts of clutter. Learning to deal with the adversarial clutter present in Gimpy has helped us in understanding generic object recognition problems.

Our related work on finding people and generic objects.

A high-level description of our method can be found here.

If you would like more details, see our paper from CVPR 2003.

Below are a few examples of images analyzed using our method, and the word that was found. Correct words are shown in green, incorrect words in red. For EZ-Gimpy we ran experiments on 191 images. We were able to correctly identify the word in 176 of these images: a success rate of 92%! Our algorithm takes only a few seconds to process one image. If you would like to see our results on all 191 images, please click here.


Gimpy

The more difficult version of the Gimpy CAPTCHA presents an image such as the one shown below. There are 10 words (some repeated), overlaid in pairs. The test-taker is required to list 3 of the words present in the image in order to pass.

The clutter in these images, real words instead of random background textures, is much more difficult to deal with. In addition, we must find 3 words instead of just one. Our current algorithm can find 3 correct words and pass this Gimpy test 33% of the time. Note that even if we could guess a single word correctly 70% of the time, we would only expect to get all 3 words correct approximately 0.7*0.7*0.7 = 34% of the time. Moreover, given our 33% success rate, this CAPTCHA would still be ineffective at filtering out "bots", since they can bombard a program with thousands of requests.
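The arithmetic behind these two claims can be checked with a short sketch. It is not part of our algorithm; it only illustrates the numbers, under the simplifying assumption that word guesses and repeated attempts are statistically independent. The function names are hypothetical and chosen for illustration only.

```python
def all_words_correct(per_word_accuracy: float, words_required: int = 3) -> float:
    """Chance that every required word is right, assuming independent guesses."""
    return per_word_accuracy ** words_required


def pass_at_least_once(pass_rate: float, attempts: int) -> float:
    """Chance that a bot passes at least one of several independent attempts."""
    return 1.0 - (1.0 - pass_rate) ** attempts


def expected_attempts(pass_rate: float) -> float:
    """Mean number of attempts until the first pass (geometric distribution)."""
    return 1.0 / pass_rate


if __name__ == "__main__":
    # 70% per-word accuracy, 3 words required: the 0.7*0.7*0.7 figure from the text.
    print(f"all 3 words correct: {all_words_correct(0.7):.3f}")
    # A bot that passes 33% of the time and simply retries.
    print(f"expected attempts per pass: {expected_attempts(0.33):.2f}")
    print(f"pass within 10 attempts: {pass_at_least_once(0.33, 10):.3f}")
```

Under these assumptions, a bot with a 33% pass rate needs only about three requests on average per successful pass, which is why retrying is such a cheap strategy.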

The algorithm we use is outlined in detail in our paper linked above. Check out our results on the harder version of Gimpy.